Note: These notes cover only what the specification requires.

Lexical Analysis

Lexer → Lexemes → Tokens → Token Stream → Symbol Table

Lexemes

Lexemes are an intermediate stage between the source code and tokens. A lexeme is effectively a segment of source text waiting to be tokenised.
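As a rough illustration of this stage, the sketch below cuts a line of source into lexemes and then classifies each one as a token. The token categories and the names `TOKEN_PATTERNS`, `split_into_lexemes` and `tokenise` are assumptions made for this example, not something defined by the specification.

```python
import re

# Hypothetical token categories for a tiny language; a real lexer defines many more.
TOKEN_PATTERNS = [
    ("NUMBER", r"\d+"),
    ("IDENTIFIER", r"[A-Za-z_]\w*"),
    ("OPERATOR", r"[=+\-*/()]"),
]

def split_into_lexemes(source):
    """Cut the source text into lexemes, skipping whitespace."""
    combined = "|".join(pattern for _, pattern in TOKEN_PATTERNS)
    return re.findall(combined, source)

def tokenise(lexemes):
    """Classify each lexeme as a (token type, lexeme) pair."""
    tokens = []
    for lexeme in lexemes:
        for token_type, pattern in TOKEN_PATTERNS:
            if re.fullmatch(pattern, lexeme):
                tokens.append((token_type, lexeme))
                break
    return tokens

lexemes = split_into_lexemes("my_num = 7 * mult")
token_stream = tokenise(lexemes)
```

Here `lexemes` holds the raw segments (`my_num`, `=`, `7`, `*`, `mult`) and `token_stream` pairs each segment with its category.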

Symbol Table

A symbol table stores all identifiers (variables, constants, and subroutines) used in the source code along with their attributes, such as data type, scope, and memory address.

Evaluation

The symbol table is used throughout the compilation process:

- Performance: It uses hashing (not simple indexing), so the compiler can look up an identifier's details in near-constant time rather than searching every entry.

- Relationship to Tokens: It is populated during Lexical Analysis at the same time the token stream is generated.

- Validation: The syntax and semantic analysers use the table to find errors, such as undeclared variables or type mismatches.
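The points above can be sketched with a Python dictionary, which is itself a hash table. This is only a minimal model: the attribute names (`kind`, `data_type`, `scope`, `address`) and the helper functions `declare` and `lookup` are assumptions for illustration.

```python
# A dict hashes the identifier name, so a lookup does not scan every entry.
symbol_table = {}

def declare(name, kind, data_type=None, scope="global", address=None):
    """Add an identifier; unknown attributes stay None until a later stage fills them in."""
    symbol_table[name] = {
        "kind": kind,
        "data_type": data_type,
        "scope": scope,
        "address": address,
    }

def lookup(name):
    """Return the identifier's attributes, or raise NameError if it was never declared."""
    if name not in symbol_table:
        raise NameError(f"undeclared identifier: {name}")
    return symbol_table[name]

# Lexical analysis records the identifier as soon as it is seen.
declare("my_num", kind="variable")

# A later stage fills in data that was not known at that point.
symbol_table["my_num"]["data_type"] = "integer"
```

Calling `lookup` on a name that was never declared raises an error, which mirrors the undeclared-variable check made during validation.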

Syntax Analysis

Syntax Diagram → Abstract Syntax Tree → update Symbol Table (fill in missing data)

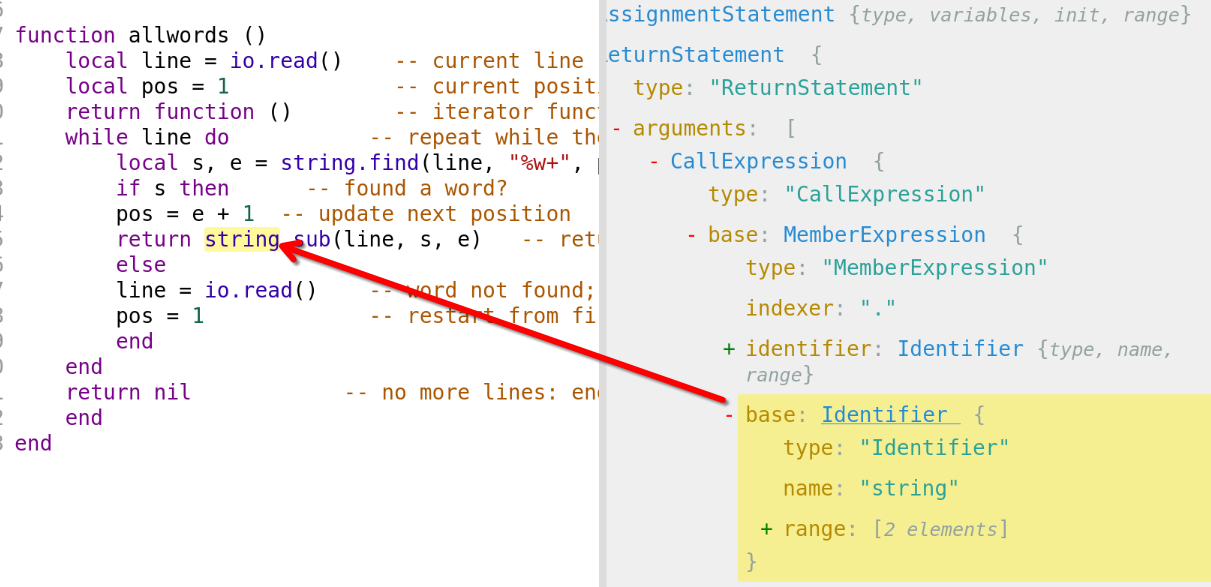

Abstract Syntax Tree (AST)

A tree, generated from the tokens, that represents the syntax of the source code in abstract form: its nodes hold the operations and values, and its branches lay out the possible routes through them.
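One way to see such a tree is to ask Python's own parser for it through the standard `ast` module. This shows Python's AST for a made-up statement (`total = price * 2`), so the node names are Python's and may differ from those in another compiler.

```python
import ast

# Parse one statement into Python's abstract syntax tree.
tree = ast.parse("total = price * 2")

assignment = tree.body[0]        # the assignment node at the top of the statement
target = assignment.targets[0]   # left-hand side: the name being assigned to
expression = assignment.value    # right-hand side: a binary operation

# Collect the type of every node in the tree.
node_types = {type(node).__name__ for node in ast.walk(tree)}
```

The assignment node has two branches: the target name `total` and a multiplication whose own branches are the name `price` and the constant `2`.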

Code Generation and Optimisation

During code generation, the analysed tokens (by this stage structured as the abstract syntax tree) are transformed into object code. The code may be optimised beforehand, during generation, or both, depending on the compiler.
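A minimal sketch of the idea, assuming the generator walks a syntax tree and targets a made-up stack machine. The instruction names (`PUSH`, `LOAD`, `ADD`, `SUB`, `MUL`, `DIV`) and the function `generate` are hypothetical; real object code is machine code for a specific processor.

```python
import ast

# Hypothetical opcodes for an imaginary stack machine.
OPCODES = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL", ast.Div: "DIV"}

def generate(node):
    """Return a list of stack-machine instructions for an arithmetic expression."""
    if isinstance(node, ast.Constant):
        return [f"PUSH {node.value}"]
    if isinstance(node, ast.Name):
        return [f"LOAD {node.id}"]
    if isinstance(node, ast.BinOp):
        # Emit code for both operands first, then the operation that combines them.
        return generate(node.left) + generate(node.right) + [OPCODES[type(node.op)]]
    raise NotImplementedError(type(node).__name__)

expression = ast.parse("mult * my_num + 1", mode="eval").body
object_code = generate(expression)
```

For this expression the sketch yields `LOAD mult`, `LOAD my_num`, `MUL`, `PUSH 1`, `ADD`: the operands of each operation are emitted before the operation itself.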

Optimisation Example

Rules:

- removal of redundant operations whilst producing object code that achieves the same effect as the source program

- removal of unused subroutines

- removal of unused variables and constants

my_old_num = 6 # unused var

def multiply(a, b): # unused subroutine

    return a * b

my_num = 7

mult = int(input("Multiplier: "))

my_num = 7 # redundant operation

print(mult * my_num)

This would then become (representation):

# removed unused var

# removed unused subroutine

my_num = 7

mult = int(input("Multiplier: "))

# removed redundant operation

print(mult * my_num)
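Two of the rules (unused variables and unused subroutines) can be approximated with a short check over Python's `ast` module. This is a sketch under simplifying assumptions: it only looks at top-level definitions, it treats a name as used if it is read anywhere in the source, and it does not detect the redundant reassignment. The function name `find_unused` is made up for this example.

```python
import ast

SOURCE = '''
my_old_num = 6
def multiply(a, b):
    return a * b
my_num = 7
mult = int(input("Multiplier: "))
my_num = 7
print(mult * my_num)
'''

def find_unused(source):
    """Return top-level names that are defined but never read in the source."""
    tree = ast.parse(source)  # the source is parsed only, never executed

    # Names introduced by top-level assignments and subroutine definitions.
    defined = set()
    for statement in tree.body:
        if isinstance(statement, ast.FunctionDef):
            defined.add(statement.name)
        elif isinstance(statement, ast.Assign):
            for target in statement.targets:
                if isinstance(target, ast.Name):
                    defined.add(target.id)

    # Names that are read (loaded) anywhere in the program.
    used = {
        node.id
        for node in ast.walk(tree)
        if isinstance(node, ast.Name) and isinstance(node.ctx, ast.Load)
    }
    return sorted(defined - used)

unused = find_unused(SOURCE)
```

On the example program this reports `multiply` and `my_old_num`, the same two items removed in the optimised version.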